Desktop-related topics.

Do you have original and sound research results concerning compute and storage clouds, distributed computing, crowdsourcing and human interaction with clouds, utility, green and autonomic computing, scientific computing or big data? (Or do you know people who surely have?)

UCC 2013, the premier conference on these topics with its six co-located workshops, welcomes academic and industrial submissions to advance the state of the art in models, techniques, software and systems. Please consult the Call for Papers and the complementary calls for Tutorials and Industry Track Papers as well as the individual workshop calls for formatting and submission details.

This will be the 6th UCC in a successful conference series. Previous events were held in Shanghai, China (Cloud 2009), Melbourne, Australia (Cloud 2010 & UCC 2011), Chennai, India (UCC 2010), and Chicago, USA (UCC 2012). UCC 2013 happens while cloud providers worldwide add new services and increase utility at a high pace and is therefore of high relevance for both academic and industrial research.

Choice is good for users, but when too much choice becomes a problem, smart helpers are needed to choose the right option. In the world of services, there are often functionally comparable or even equivalent offers which differ only in aspects such as pricing or fine-print regulations. Especially when services are used in an automated way, e.g. for storing data in the cloud underneath a desktop or web application, users could not care less about which services they use as long as the functionality is available and selected preferences are honoured (e.g. not storing data outside of the country).
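To make the idea of such a helper a bit more tangible, here is a minimal sketch in Python. It assumes that each offer is described only by a price and a storage country, and that the user's preference is a set of allowed countries. The `Offer` class, the provider names and the `select_offer` function are made up for illustration and are not part of any existing tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    name: str            # hypothetical provider name
    price_per_gb: float  # price per GB in an arbitrary currency
    country: str         # country in which the data would be stored


def select_offer(offers, allowed_countries):
    """Return the cheapest offer that honours the country preference, or None."""
    # Hard preference first: drop every offer storing data elsewhere.
    admissible = [o for o in offers if o.country in allowed_countries]
    # Soft preference second: among the remaining offers, pick the cheapest.
    return min(admissible, key=lambda o: o.price_per_gb, default=None)


offers = [
    Offer("alpha-store", 0.05, "US"),
    Offer("beta-store", 0.08, "DE"),
    Offer("gamma-store", 0.07, "DE"),
]
best = select_offer(offers, allowed_countries={"DE"})
print(best.name if best else "no admissible offer")
```

Treating the country as a hard constraint and the price as a ranking criterion is just one possible design; a real helper would more likely weigh several criteria against each other.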

The mentioned smart helpers need a brain to become smart, and this brain needs to be filled with knowledge. A good way to represent this knowledge is through ontologies. Today marks the launch of the consolidated WSMO4IoS ontology concept collection which contains this knowledge specifically for contemporary web services and cloud providers. While the collection is still in its infancy, additions will follow quickly over the next weeks.

One thing which bugged me when working with ontologies was the lack of a decent editor. There are big Java behemoths available, which certainly can do magic for each angle and corner allowed by the ontology language specifications, but a small tool would be a nice thing to have. And voilà, the idea for the wsmo4ios-editor was born. It's written in PyQt and, while still far from being functional, currently 8.3 kB in size (plus some WSML-to-Python code which could easily be generated on the fly). The two screenshots show the initial selection of a domain for which a service description should be created, and then the editor view with dynamically loaded tabs for each ontology, containing the relevant concepts, relations (hierarchical only) and units.

The preliminary editor code can be found in the kde-cloudstorage git directory. It is certainly a low-priority project but a nice addition, especially considering the planned ability to submit service descriptions directly to a registry or marketplace through which service consumers can then select the most suitable offer. Know the service - with WSMO4IoS :)

How can desktop users assume command over their data in the cloud? This follow-up to the previous blog entry on a proposed optimal cloud storage solution in KDE concentrates on the smaller integration pieces which need to be combined in the right way to achieve the full potential. Again, the cloud storage in use is assumed to be dispersed among several storage providers with the NubiSave controller as opposed to potentially unsafe single-provider setups. All sources are available from the kde-cloudstorage git directory until they may eventually find a more convenient location.

The cloud storage integration gives users a painless transfer of their data into the world of online file and blob stores. Whatever the users have paid for or received for free, shall be intelligently integrated this way. First of all, the storage location naturally integrates with the network folder view. One click brings the content entrusted to the cloud to the user's attention. Likewise, this icon is also available in the file open/save dialogues, fusing the local and remote file management paradigms.

Having a file stored either locally or in the cloud is often undesirable. Instead, a file should be available locally and in the cloud at the same time, with the same contents, through some magic synchronisation. In the screenshot below, the user (who is apparently a friend of Dolphin) clicks on a file or directory and wants it to be synchronised with the cloud in order to access it from other devices or to get instant backups after modifications. The alternative menu item would move the data completely but leave a symlink in the local filesystem, so that having the data in the cloud will not change the user's workflow except for perhaps sluggish file operations on cold caches.

What happens is that instead of copying right away, the synchronisation link is registered with a nifty command-line tool called syncme (interesting even for users who mostly refrain from integrated desktops). From that point on, a daemon running alongside this tool synchronises the file or directory on demand. The screenshot below shows the progress bar representing the incremental synchronisation. The rsync-kde tool is typically hidden behind the service menu as well.

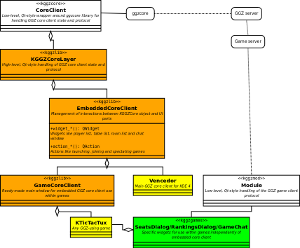

The current KDE cloud storage integration architecture is shown in the diagram below. Please note that it is quite flexible and modular. Most of the tools can be left out and fallbacks will automatically be picked, naturally coupled with a degraded user experience. In the worst case, a one-time full copy of the selected files is performed without any visual notification of what is going on - not quite what you want, so for the best impression, install all tools together.

Naturally, quite a few ingredients are missing from this picture, but rest assured that they're being worked on. In particular, how can the user select, configure and assemble cloud storage providers with as few clicks and hassles as possible? This will be a topic for a follow-up post. A second interesting point is that ownCloud can currently be used as a backend storage provider to NubiSave, but could theoretically also serve as the entry point, e.g. by running on a router and offloading all storage to providers managed through one of its applications. This is another topic for a follow-up post...

What is the free desktop ecosystem's answer to both the growing potential and the growing threat from the cloudmania? Unfortunately, there is not much to it yet. My continuous motivation to change this can best be described by this excerpt from an abstract of a DS'11 submission:

The use of online services, social networks and cloud computing offerings has become increasingly ubiquitous in recent years, to the point where a lot of users entrust most of their private data to such services. Still, the free desktop architectures have not yet addressed the challenges arising from this trend. In particular, users are given little systematic control over the selection of service providers and use of services. We propose, from an applied research perspective, a non-conclusive but still inspiring set of desktop extension concepts and implemented extensions which allow for more user-centric service and cloud usage.

When these lines were written, we already had the platform-level answers, but not yet the right tools to build a concrete architecture for everyday use. The situation has recently improved with results pointing in the right direction. This post describes such a tool for optimal cloud storage in particular (optimal compute clouds are still ahead of us).

Enter NubiSave, our award-winning optimal cloud storage controller. It evaluates formal cloud resource descriptions with some SQL/XML schema behind them plus some ontology magic such as constraints and axioms, together with user-defined optimality criteria (i.e. security vs. cost vs. speed). Then, it uses these to create the optimal set of resources and spreads data entering through a FUSE-J folder among the resources, scheduling again according to optimality criteria. Even if encryption is omitted or brute-forced, no single cloud provider gets access to the file contents. Furthermore, transmission and retention quality is increased compared to legacy single-provider approaches. This puts the user in command and the provider in the backseat. Thanks to redundancy, insubordinate providers can be dismissed at the click of a button :-)
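For readers who wonder how spreading data with redundancy can work in principle, here is a small Python sketch. It is not NubiSave's actual algorithm, just a RAID-5-like illustration under my own assumptions: the data is cut into k fragments plus one XOR parity fragment, each fragment would go to a different provider, and any single lost fragment can be rebuilt from the others. Note that plain striping like this only hides the complete file from each provider, not the fragment it holds. The function names are made up for this example.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two byte strings of equal length."""
    return bytes(x ^ y for x, y in zip(a, b))


def disperse(data: bytes, k: int) -> list:
    """Split data into k equally sized fragments plus one XOR parity fragment."""
    size = -(-len(data) // k)                 # ceiling division
    padded = data.ljust(size * k, b"\0")      # pad so that all fragments match
    fragments = [padded[i * size:(i + 1) * size] for i in range(k)]
    parity = reduce(xor_bytes, fragments)
    return fragments + [parity]


def recover(fragments: list, length: int) -> bytes:
    """Rebuild the data when at most one fragment (marked as None) is missing."""
    missing = [i for i, f in enumerate(fragments) if f is None]
    if len(missing) > 1:
        raise ValueError("more than one fragment lost")
    fragments = list(fragments)
    if missing:
        # The XOR of all surviving fragments equals the missing one.
        present = [f for f in fragments if f is not None]
        fragments[missing[0]] = reduce(xor_bytes, present)
    # Drop the parity fragment and the padding.
    return b"".join(fragments[:-1])[:length]


data = b"private holiday photos"
parts = disperse(data, 3)      # four fragments for four providers
parts[1] = None                # one provider has been dismissed
print(recover(parts, len(data)) == data)
```

The original length has to be stored somewhere (here it is simply passed to `recover`) because the padding would otherwise be indistinguishable from trailing zero bytes in the data.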

Going from proof-of-concept prototypes to usable applications requires some programming and maintenance effort. This is typically not directly on our agenda, but in selected cases we choose this route for increasing the impact through brave adopters. The recently started PyQt GUI shown here gives a good impression of how desktop users will be able to mix and match suitable resource service providers. This tool will soon be combined with the allocation GUI, which interestingly enough is also written in PyQt, for real-time control of what is going on between the desktop and the cloud.

Of course, there are still plenty of open issues, especially concerning automation for the masses - how many GB of free storage can we get today? Without effort to set it up? But the potential of this solution over deprecated single-vendor relationships is pretty clear. If people want RAIDs for local storage, why don't they go for RAICs and RAOCs (Redundant Arrays of Optimal Cloud storage) already? In fact, a fairly large company has shown significant interest in this work, and clearly we hope for more companies securing their sovereignty in the cloud through technologies such as ours. And we hope for desktops offering dead simple tools to administer all of this, and complementary efforts such as ownCloud to add fancy web-based sharing capabilities.

Making optimal use of externally provided resources in the cloud is a good first step (and a necessity to preserve leeway in the cloud age), but being able to collaboratively participate in community/volunteer cloud resource provisioning is the logical path going beyond just the consumption. We are working on a community-driven cloud resource spotmarket for interconnected personal clouds and on a sharing tool to realise this vision. The market could offer GHNS feeds for integration into the Internet of Services desktop. I'm glad to announce that in addition to German Ministry of Economics and EU funding, for the entire year of 2012 we were able to acquire funds from the Brazilian National Council for Scientific and Technological Development (CNPq). This means that next year I will migrate between hemispheres a couple of times to work with a team of talented people on scalable cloud resource delivery to YOUR desktop. Hopefully, more people from the community are interested in joining our efforts, especially for desktop and distribution integration!

As a researcher, I'm less concerned with software development and more with looking at things which exist, seeing how they make sense, and checking whether they help me achieve an innovation which makes certain problems go away. When the problem is then still not fully solved, and this fact blends in with free weekend time, my computers are still available for software development :-)

Today, I've been looking at how easy it would be to support the Open Collaboration Services as a social, programmable web interface to the GGZ Gaming Zone Community. As a result, there is some web service API available as an optional adapter to GGZ Community, and an instance is running online. It supports the CONFIG service, and partially supports the PERSON and FRIEND services.

These were the easy parts. Anything fancy will require improvements to the OCS specification (see my suggestions sent to the mailing list) and further work on social gaming from the GGZ side. The latter will certainly also help with efforts such as gaming freedom.

Some news from the games sprint which is now fully staffed (10 people) and running at full speed until Sunday. We've just completed our round of lightning talks with inspiring technology-laden talks given by Stefan (Tagaro as libkdegames successor), Leinir (Gluon) and myself (GGZ). This has brought forward some interesting integration and code de-duplication points to combine the gaming cloud, the social desktop, the game development stacks and game centres.

For my own agenda aligned with the wish of being able to find more time for software development again, I've pushed onto my TODO list:

- Moving remaining KGGZ libraries from GGZ SVN into KDE Games SVN, to prepare them for integration with Tagaro

- Look at Gluon more closely to see how to accommodate its future social/multiplayer needs

- OCS provider from the GGZ Community Web Services API, so that Gluon players can talk to GGZ communities

- Extending the Gluon KDE player with KGGZ libraries

More information about the goals, lightning talks and so on can be found on the wiki page.

Inspired by a feature request for more complex filtering rules, Commitfilter is now able to import CIA XML rulesets. This makes it possible to get all mails related to translations, for example, without auto-committed Scripty mails. You can also subscribe to all commits by myself except when I'm working on playground stuff. Note that the CIA configuration is currently separate from the traditional path/author configuration. It is also not reflected in the live filtering previews. One option would be to switch to this rule language entirely and thus be able to export from Commitfilter to CIA again. Let's see how many people make use of this feature before jumping to the next one. Send feedback to kde-services-devel.
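To illustrate what such composed rules amount to, here is a rough Python sketch. It does not use the CIA XML syntax and is not Commitfilter code; the `Commit` class, the path prefixes and the author names are assumptions chosen only to mirror the two examples above (translations without the bot, and one author's commits except playground work).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    author: str  # committer account name
    path: str    # path touched by the commit (hypothetical layout)


def path_under(prefix):
    """Rule matching commits below a given path prefix."""
    return lambda c: c.path.startswith(prefix)


def author_is(name):
    """Rule matching commits by a given author."""
    return lambda c: c.author == name


def all_of(*rules):
    """Rule matching only if every given rule matches."""
    return lambda c: all(r(c) for r in rules)


def negate(rule):
    """Rule matching exactly when the given rule does not."""
    return lambda c: not rule(c)


# Translation commits, but not the automated ones.
translations = all_of(path_under("l10n/"), negate(author_is("scripty")))
# Everything by one author, except playground work.
own_work = all_of(author_is("alice"), negate(path_under("playground/")))

commits = [
    Commit("scripty", "l10n/de/messages.po"),
    Commit("bob", "l10n/fr/messages.po"),
    Commit("alice", "playground/games/foo.cpp"),
    Commit("alice", "kdegames/bar.cpp"),
]
print([c.path for c in commits if translations(c)])
print([c.path for c in commits if own_work(c)])
```

Building rules from small combinable predicates is what makes "everything except ..." subscriptions possible, which a flat path/author list cannot express.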

In related news, GHNS is being picked up by GNOME developers again. Should give us some interesting material to talk about at Akademy/GCDS.

Historically, the GGZ approach to consistent multiplayer games on the desktop was to offload all the non-game specific tasks like login, chat and game start into special applications called core clients. After a while, this turned out to be a terrific idea but it contradicted the behaviour of most players. Their usual workflow is to start the game first and then switch to online mode rather than the other way around. For some time now, all the necessary components to allow this alternative path have been available for Gtk+ game integration, but no such widget framework has existed for KDE. (In all fairness, the first game ever with embedded core client support was Widelands which uses SDL.)

This is now changing: With the introduction of the classes GameCoreClient and KGGZCoreLayer, games can easily integrate either an off-the-shelf multiplayer control panel with a list of players and running games, or pieces thereof through the lower-level EmbeddedCoreClient class. Both widgets and standard actions like "connect to game as spectator" or "launch new game" are supported by this framework. A number of technological goodies like social gaming are thus also becoming available with only a few lines of code in the game clients.

Having a strong service-oriented background, my interest in reusable software is rather high. Ideally, most parts in service and component development would be reusable with well-defined interfaces and loose coupling.

In KDE land, we're now forging plans for 4.3 features and are faced with the undesirable dilemma of growing social software on the one hand (good!), but horrible widget inconsistencies on the other hand (not good!). The area of chat widgets is such an inpatient in need of consistency-increasing medication. Chat widgets are used in (drumroll...) chat applications, but also in online games, collaborative text editors and office applications, and just about anything else which has humans connected to it. In short, we do have a need for a chat widget set shared at the kdelibs level to increase consistency and cut down the number of redundant codelines in the already infinitely growing codebase. SoC candidates, anyone?

However, the search for a suitable candidate with a reasonable set of features is not easy. Let's follow good habits here by looking at the candidates:

KGGZ (part of the KDE3-based ggz-kde-client package) uses its own chat widget based on Qt classes. It offers nickname autocompletion, history, colouring and everything including the kitchensync, but is clearly protocol-specific and would need porting anyway.

Vencedor (heading towards kdegames) uses the KChat class from libkdegames. It is rather old, not maintained, doesn't look fancy and is domain-specific for games, especially two-player games.

KBattleship, despite being part of kdegames, uses its own chat widget! It's rather small and not nice or featureful, but at least it's generic.

Kopete uses its own chat widget which is rather flexible and full of cross-protocol features, including spell checkers and whatnot. According to its developers it should be possible to make it reusable by relying on the libkopete interface, but so far this still resides in kdenetwork and won't move due to ABI compatibility concerns. There are some interesting ideas currently being discussed, though, so it could still be possible.

Quassel uses its own chat widget. This IRC client receives a lot of developer and user attention at the moment, but according to its developers is not easily reusable.

Let's also follow really good habits by looking at the friendly competition:

Gobby uses its own chat widget based on GTKmm which is clearly Gobby-specific. It provides history, but no nickname autocompletion or emoticons.

GGZ-Gtk uses XText, the quite reusable XChat widget, which has almost all of the features one needs. However, the last time we merged the latest XText codebase, a lot of compiler warnings and some errors were introduced and have been there ever since. Not sure if XText is still maintained at all.

GGZ-Java uses its own chat widget based on AWT/Swing which is clearly GGZ-specific. The features are certainly sufficient for online gaming, and it's stylable with CSS. And, oops, I just spotted a bug where it assumes the name of the chatbot to always equal "MegaGrub".

To summarise, most people like to reinvent the wheel, and despite having been one of them in the past I'd like to see some consolidated generic chat widget becoming available to any KDE application.

Last Friday, January 30, Dominik Haumann and I went from Darmstadt to Frankfurt am Main to join the KDE 4.2 Release Party in the Brotfabrik. It wasn't the biggest such event, but had a nice mix of users and contributors, of locals and guests.

I had my N810 with me, which was unfortunately out of battery that evening, as well as a similarly-spec'd subnotebook which proves that the latest KDE compiles and runs just fine on a 400 MHz processor with 256 MB RAM. Not fast as light, but a lot more usable than it might appear at first.

The photo below shows, in clockwise order starting from the lower left: Meinhard, Andreas (openSUSE website guy), Manuel (Quassel IRC client guy), Claudia (e.V. office), Jens-Michael (Marble hacker), Isabel (Linux-something, sorry can't decipher, and not visible on this photo), Andreas (a KDE-Win32 user), Thomas (DE-CIX), Lukas (Linux Lancers captain), Alex (of CMake fame), Dominik (of Kate fame), myself and Michael (KHotKeys). Aren't they a great bunch of people to whom you'd entrust the fate of your desktop? (At least compared to making informed decisions about buying cameras which handle perspectives correctly, ahem.)